Serverless Genomics

Using WebAssembly and Cloudflare Workers to power genomics analysis

It sometimes feels like WebAssembly was made for genomics: many bioinformatics tools are written in C, C++, or Rust, and web apps increasingly perform more of their analysis directly in the browser.

While it makes sense to run WebAssembly in the browser to analyze local data, what if that data is in the cloud? We don’t want web apps to download multi-gigabyte files just to analyze them in the browser, but at the same time, common cloud architectures for data analysis (Spot VMs, AWS Batch, etc.) get expensive real fast for tool builders.

Enter Serverless Functions

For some applications, serverless functions could be a more cost-effective and scalable way to process data. (TL;DR of serverless functions: you provide code to a cloud provider and, in return, you get an API endpoint that runs your code on demand and scales it for you.)

In this article, we’ll explore using Cloudflare to that end. As we’ll see, Cloudflare Workers are particularly well-suited as they feature 0 ms initialization time (i.e. no “cold starts”), run at the edge (i.e. in a data center close to your users), and have a pricing model that doesn’t require a degree in quantum physics to understand :)

Time to build something!

As a concrete example, let’s build an API that simulates DNA sequencing data. To power those simulations, I compiled the existing C tool wgsim to WebAssembly using biowasm. wgsim needs a reference DNA sequence as a starting point, to which it applies random modifications that simulate mutations and the errors introduced during sequencing; we’ll use the human genome reference hosted on the public S3 bucket of the 1,000 Genomes Project. Here’s a schematic of the architecture:

The steps are:

- The user makes an API call, specifying genomic coordinates of interest.

- Given those coordinates, the API only needs to fetch a subset of the 3 GB reference file. To determine which byte range to download, we use the index file, which maps chromosomes to byte offsets.

- As we stream data from S3 into our Worker, we run wgsim.wasm repeatedly and stream the simulation results to the user as they are computed.
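The index lookup in the second step can be sketched as follows. This assumes the reference is an uncompressed FASTA file with a samtools faidx-style .fai index (five tab-separated columns: name, sequence length, byte offset of the first base, bases per line, bytes per line including the newline). The function names parseFai, byteOffset, and byteRange are hypothetical and not taken from the wgsim-sandbox source.

```typescript
// Hypothetical sketch: map a genomic interval to the byte range that covers it
// in an uncompressed FASTA file, using a samtools faidx-style .fai index.

interface FaiEntry {
  name: string;
  length: number;    // number of bases in the sequence
  offset: number;    // byte offset of the sequence's first base
  lineBases: number; // bases per FASTA line
  lineWidth: number; // bytes per FASTA line, including the newline
}

// Parse .fai text (NAME, LENGTH, OFFSET, LINEBASES, LINEWIDTH per line).
function parseFai(text: string): Map<string, FaiEntry> {
  const entries = new Map<string, FaiEntry>();
  for (const line of text.split("\n")) {
    if (line.trim() === "") continue;
    const [name, length, offset, lineBases, lineWidth] = line.split("\t");
    entries.set(name, {
      name,
      length: Number(length),
      offset: Number(offset),
      lineBases: Number(lineBases),
      lineWidth: Number(lineWidth),
    });
  }
  return entries;
}

// Byte offset of the base at 0-based position `pos`, skipping line breaks.
function byteOffset(e: FaiEntry, pos: number): number {
  return e.offset + Math.floor(pos / e.lineBases) * e.lineWidth + (pos % e.lineBases);
}

// Inclusive byte range [first, last] covering bases [start, stop)
// (0-based, half-open), usable as an HTTP header `Range: bytes=first-last`.
function byteRange(e: FaiEntry, start: number, stop: number): [number, number] {
  const s = Math.max(0, start);
  const t = Math.min(e.length, stop);
  if (s >= t) throw new RangeError(`empty interval ${start}-${stop} on ${e.name}`);
  return [byteOffset(e, s), byteOffset(e, t - 1)];
}
```

The downloaded bytes can still contain newlines (a region can span several FASTA lines), so they would be stripped before the sequence is handed to wgsim.wasm. A gzip- or bgzip-compressed reference would need a different lookup.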

Since Cloudflare Workers are limited to 128 MB of RAM (for now!), streaming data is a great way to keep memory consumption in check.
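One way to keep memory bounded while streaming is to re-chunk the incoming sequence into fixed-size windows before each wgsim.wasm call. The sketch below assumes the streamed bytes have already been decoded to text and contain only sequence lines (no FASTA header). The windows generator and its windowSize/overlap parameters are hypothetical; overlap is there so that reads straddling a window boundary can still be simulated.

```typescript
// Hypothetical sketch: turn arbitrarily sized text chunks of FASTA sequence
// into fixed-size windows of bases. The buffer never holds more than
// windowSize + one chunk of bases, regardless of the total region size.
function* windows(
  chunks: Iterable<string>,
  windowSize: number,
  overlap = 0,
): Generator<string> {
  if (overlap < 0 || overlap >= windowSize) {
    throw new RangeError("need 0 <= overlap < windowSize");
  }
  let buffer = "";
  let emitted = false;
  for (const chunk of chunks) {
    buffer += chunk.replace(/\s+/g, ""); // drop FASTA line breaks
    while (buffer.length >= windowSize) {
      yield buffer.slice(0, windowSize);
      emitted = true;
      // Keep the last `overlap` bases so the next window starts with them.
      buffer = buffer.slice(windowSize - overlap);
    }
  }
  // Emit the tail only if it contains bases not already yielded.
  if (buffer.length > (emitted ? overlap : 0)) yield buffer;
}
```

In a Worker, the same loop could be fed from response.body via a stream reader (for example after piping it through a TextDecoderStream); the synchronous version here just keeps the sketch self-contained.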

The end result

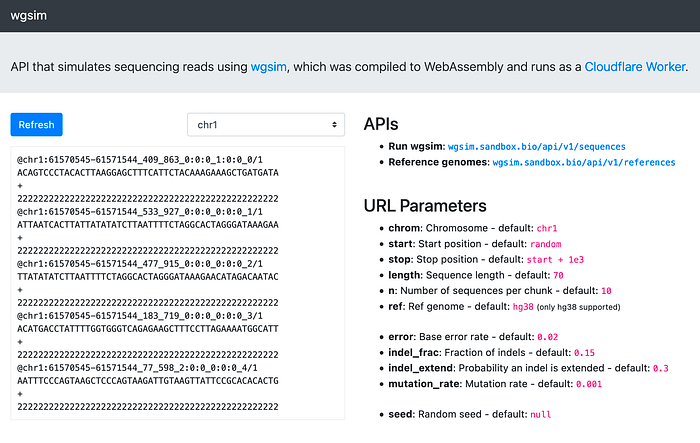

Here’s the API that generates random sequences of DNA on chromosome 1: https://wgsim.sandbox.bio/api/v1/sequences. Try refreshing the page — yes, it really is that fast.

You can also tweak the simulation parameters. For example, to simulate 50 bp reads from chr1:1,000,000–1,010,000 with a mutation rate of 0.5: https://wgsim.sandbox.bio/api/v1/sequences?chrom=chr1&start=1e6&stop=1.01e6&mutation_rate=0.5&length=50.
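Parsing those query parameters might look like the sketch below. The parameter names come from the example URL; the default values and validation rules are assumptions, not the actual API's behavior. Number() is used because it accepts scientific notation such as 1e6.

```typescript
// Hypothetical sketch of parsing the simulation query parameters.
// Any object with a get() method works, e.g. URLSearchParams or a Map.
interface ParamSource {
  get(name: string): string | null | undefined;
}

interface SimParams {
  chrom: string;
  start: number;
  stop: number;
  mutationRate: number;
  length: number;
}

// Read a numeric parameter, falling back to a default when it is absent.
function num(params: ParamSource, name: string, fallback: number): number {
  const raw = params.get(name);
  if (raw === null || raw === undefined || raw === "") return fallback;
  const value = Number(raw); // accepts "1e6", "1.01e6", "0.5", ...
  if (!Number.isFinite(value)) throw new Error(`invalid ${name}: ${raw}`);
  return value;
}

function parseSimParams(params: ParamSource): SimParams {
  // Defaults below are assumptions made for this sketch.
  const chrom = params.get("chrom") ?? "chr1";
  const start = Math.round(num(params, "start", 0));
  const stop = Math.round(num(params, "stop", start + 1000));
  const mutationRate = num(params, "mutation_rate", 0.001);
  const length = Math.round(num(params, "length", 70));
  if (start < 0 || stop <= start) throw new Error("require 0 <= start < stop");
  if (mutationRate < 0 || mutationRate > 1) throw new Error("mutation_rate must be in [0, 1]");
  if (length <= 0) throw new Error("length must be positive");
  return { chrom, start, stop, mutationRate, length };
}
```

In a Worker, parseSimParams(new URL(request.url).searchParams) would work as is, since URLSearchParams.get returns string | null.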

For a user-friendly GUI, check out wgsim.sandbox.bio. The source code for the API and GUI is available at https://github.com/robertaboukhalil/wgsim-sandbox.

Depending on the parameters you provide, some API calls can take up to ~30 seconds. Cloudflare Workers previously had a CPU time limit of 50 ms, but the API above runs on Cloudflare Workers Unbound, which is currently in beta and offers up to 30 seconds of CPU time; that limit may go up over time.

Why Cloudflare Workers?

On top of the usual benefits of a serverless architecture (scalability, pay-per-use, etc.) and support for JavaScript/WebAssembly serverless functions, Cloudflare Workers feature no cold starts, which matters for short-lived functions (with traditional serverless functions, cold starts take hundreds of milliseconds, if not seconds).

Workers are also powered by V8, the JavaScript and WebAssembly engine behind Chrome, i.e., we’re running WebAssembly in the same engine as the browser; that engine just happens to be in the cloud! Practically, this means we can execute the same .wasm file in the browser and in serverless functions, which makes it much easier to build web apps that can analyze data both locally and in the cloud.

And if you need a scalable key-value store, there’s Workers KV, which is great for read-heavy applications. For example, in Ribbon, we use Workers KV to store permalinks created by users without needing to manage and scale a database.
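A permalink store on top of a key-value API can be quite small. In the sketch below, KVLike mirrors a small subset of the Workers KV binding (get and put with string values); the key prefix, ID length, and function names are hypothetical, not Ribbon's actual scheme.

```typescript
// Hypothetical sketch of storing user permalinks in a key-value store.
// KVLike mirrors a subset of the Workers KV binding API.
interface KVLike {
  get(key: string): Promise<string | null>;
  put(key: string, value: string): Promise<void>;
}

const ALPHABET = "abcdefghijklmnopqrstuvwxyz0123456789";

// Math.random keeps the sketch dependency-free; a real service would
// prefer crypto.getRandomValues so IDs are hard to guess.
function randomId(length = 8): string {
  let id = "";
  for (let i = 0; i < length; i++) {
    id += ALPHABET[Math.floor(Math.random() * ALPHABET.length)];
  }
  return id;
}

// Save the app state as JSON and return the ID to put in the permalink URL.
async function createPermalink(kv: KVLike, state: unknown): Promise<string> {
  const id = randomId();
  await kv.put(`permalink:${id}`, JSON.stringify(state));
  return id;
}

// Look up a permalink; returns null when the ID is unknown.
async function loadPermalink(kv: KVLike, id: string): Promise<unknown> {
  const value = await kv.get(`permalink:${id}`);
  return value === null ? null : JSON.parse(value);
}
```

Because each permalink is written once and then only read, this fits the read-heavy profile mentioned above. Workers KV is eventually consistent, so a freshly created link may take a moment to become visible in other locations.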

Overall, my experience with Cloudflare Workers has been fantastic — the tooling around deploying and debugging is great, pricing is transparent and predictable, and most of all, it Just Works™.

Conclusion

Here we explored one example of compiling a genomics tool to WebAssembly and running it on demand, backed by a scalable and cost-effective API. Granted, we won’t be assembling genomes in serverless WebAssembly functions anytime soon, but this architecture can be a good fit for certain types of genomics analysis.

Here are a few potential applications (disclaimer: genomics jargon ahead):

- Samtools API: an API that runs samtools to retrieve subsets of remote .bam files. We generally can’t do this in the browser because of CORS: browsers block web apps from reading responses from other domains unless those domains explicitly allow it. For example, files stored in cloud storage are not CORS-enabled by default, so this API would help work around that limitation. I’m interested in this application because we could use it in apps like Ribbon, where it would replace the server we currently use for parsing remote .bam files.

- Sequence alignment-as-a-service: an API that runs bowtie2 (for now, the reference genome would need to fit in 128 MB of RAM). This won’t work for all use cases, but I imagine it could be a good fit for applications like single-cell sequencing, where the number of reads per cell is small. After all, who doesn’t love launching millions of API calls in parallel? /s

- Streaming k-mer analysis: an API that streams data from a cloud storage bucket and runs k-mer analysis on the fly.
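For the Samtools API idea, the key ingredient is that the API adds the CORS headers the storage bucket lacks. Below is a minimal sketch, assuming the API proxies byte ranges of .bam files; the function name and the choice of allowed methods, headers, and origins are assumptions.

```typescript
// Hypothetical sketch: CORS headers a .bam proxy API could attach to its
// responses so that browser code is allowed to read them.
function corsHeaders(
  requestOrigin: string | null,
  allowedOrigins: readonly string[] | "*",
): Record<string, string> {
  const headers: Record<string, string> = {
    "Access-Control-Allow-Methods": "GET, HEAD, OPTIONS",
    // Clients usually fetch slices of a .bam file with the Range header.
    "Access-Control-Allow-Headers": "Range",
    // Let browser code read the headers that describe partial responses.
    "Access-Control-Expose-Headers": "Content-Range, Content-Length, Accept-Ranges",
  };
  if (allowedOrigins === "*") {
    headers["Access-Control-Allow-Origin"] = "*";
  } else {
    // The response now depends on the Origin header, so caches must key on it.
    headers["Vary"] = "Origin";
    if (requestOrigin !== null && allowedOrigins.includes(requestOrigin)) {
      headers["Access-Control-Allow-Origin"] = requestOrigin;
    }
  }
  return headers;
}
```

A Worker would typically merge these headers into the proxied response and answer OPTIONS preflight requests with status 204 and the same headers.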
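The tricky part of streaming k-mer analysis is that chunks from cloud storage split the sequence at arbitrary points, so a k-mer can straddle two chunks. A minimal sketch, assuming the chunks are already-decoded sequence text with no FASTA headers: it carries the last k-1 bases of each chunk into the next one so that every k-mer is counted exactly once. The function name is hypothetical, and it counts k-mers as written (no canonical reverse-complement merging).

```typescript
// Hypothetical sketch: count k-mers over a stream of sequence chunks.
function countKmersStreaming(chunks: Iterable<string>, k: number): Map<string, number> {
  if (!Number.isInteger(k) || k < 1) throw new RangeError("k must be a positive integer");
  const counts = new Map<string, number>();
  let carry = ""; // last k-1 bases of the previous chunk
  for (const chunk of chunks) {
    const seq = carry + chunk.replace(/\s+/g, "").toUpperCase();
    // k-mers starting inside `carry` were not counted in the previous chunk,
    // because they did not fit entirely within it.
    for (let i = 0; i + k <= seq.length; i++) {
      const kmer = seq.slice(i, i + k);
      if (/[^ACGT]/.test(kmer)) continue; // skip k-mers containing N or other symbols
      counts.set(kmer, (counts.get(kmer) ?? 0) + 1);
    }
    carry = seq.slice(Math.max(0, seq.length - (k - 1)));
  }
  return counts;
}
```

Memory use is bounded by one chunk plus the k-mer table, so the table size (not the file size) is what would need to fit within the Worker's memory limit.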

I hope you enjoyed this article, and I’d love to hear from you if you have other ideas for how serverless genomics could be used.

📘 If you enjoyed this article and are looking for a practical guide to WebAssembly, check out my book Level up with WebAssembly.